When You Outsource Your Thinking to AI

When technology replaces human judgement instead of supporting it

A manager once made a $5,000 decision based on a single ChatGPT answer.

It started with an email.

Within minutes, the email had escalated into an urgent meeting.

The team had historically shared a $5,000 conference ticket amongst several staff members so that more people could attend and benefit from the learning opportunity.

But suddenly, there was concern. The manager explained that sharing this ticket might breach conference rules and could result in penalties for non-compliance and reputational risk to the organisation.

The conclusion came quickly: the team could no longer share the ticket.

And then something interesting happened.

I raised a simple but respectful question.

“Why has this suddenly become an issue now?”

As I continued probing the reasoning behind the decision, almost like applying the 5 Whys framework, the root cause gradually became clear.

Out of momentary panic, the manager had asked ChatGPT whether sharing the ticket might breach compliance rules.

AI responded cautiously.

It suggested such arrangements may violate conference policies and recommended avoiding the risk altogether.

So the manager did what many professionals are now doing.

They treated the answer as truth without testing it against reality.

No one had verified this interpretation directly with the conference organisers.

When AI replaces thinking

No one asked another question. Everyone in the room accepted the conclusion.

This is one of the subtle risks emerging in an AI-enabled world.

AI generates responses that sound confident, even when they are simply probabilistic predictions based on patterns in data.

When people are busy or under pressure, confident language can easily be mistaken for certainty. This is often the moment thinking quietly disappears.

Instead of asking:

“How do we know this is true?”

People start asking:

“What does AI say?”

If this resonates, you might enjoy Let’s Get Clear.

I write about how to cut through complexity, question assumptions, and build clarity in an increasingly noisy world.

Subscribe to receive future insights, frameworks, and reflections. There will be more coming.

Trust your intuition when something doesn’t sound right

As I sat in that meeting, something didn’t quite add up.

Not because anyone had bad intentions, but because the reasoning felt incomplete.

Often when something doesn’t sound right, it’s because an assumption is hiding somewhere in the logic.

Learning to trust that instinct is an important professional skill. Experienced professionals know the pattern: if something doesn’t sound right, it usually isn’t.

The best way to act on that instinct is to keep asking questions until the root cause of the problem is clear. So I asked another question in the meeting:

“Why don’t we simply ask the conference organisers if they can accommodate our situation?”

The reaction was immediate.

“They won’t allow it.”

“It’s obvious.”

“AI already checked.”

But clarity doesn’t come from assumptions or taking the word of a robo-advisor. It comes from verifying the facts for yourself.

Eventually the organisers were contacted and the answer came back quickly. Sharing the ticket was completely permitted.

Nothing illegal.

Nothing non-compliant.

No issue at all — except for the time wasted discussing a non-problem.

The lesson? Never assume an AI-generated answer is the same thing as a confirmed fact in the real world.

The hidden cognitive cost of outsourcing thinking

This story isn’t really about conference tickets.

It highlights a growing habit across modern organisations.

We are increasingly outsourcing judgement to technology.

Researchers refer to this as cognitive offloading — relying on external tools to reduce the mental effort of solving problems.

That is helpful when it automates trivial tasks so that you can focus on higher-stakes priorities.

But when a tool is used constantly as an advisor or oracle, it can weaken our ability to reason independently over time.

For example, studies on navigation suggest that heavy reliance on GPS is associated with weaker spatial memory, possibly because the hippocampus, a brain region central to navigation, is used less when technology performs the task.

In simple terms, when tools do the thinking long enough, the brain stops practising. It’s not very different from what happens when you stop going to the gym.

Use the muscle less and it weakens.

Don’t believe me? Even AI says the same thing

At this point, you might reasonably ask:

“Surely AI can guide our judgement if it has access to more information than we do?”

So I tested that assumption.

I asked two different AI systems a simple question:

“Should humans use AI to replace their intuition and judgement which is based on real-world experience?”

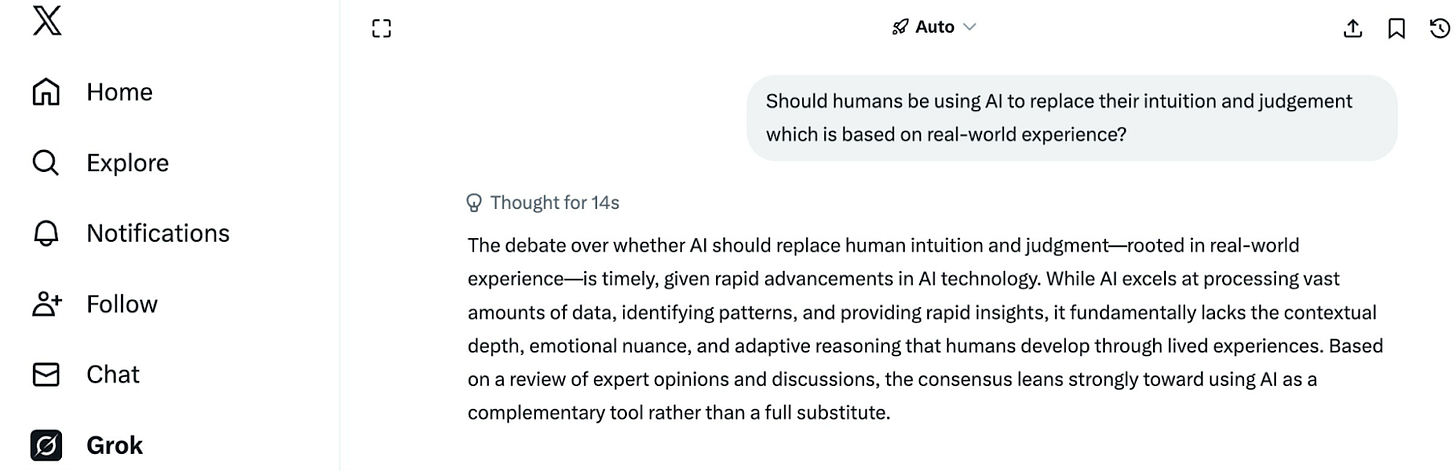

Grok’s Response

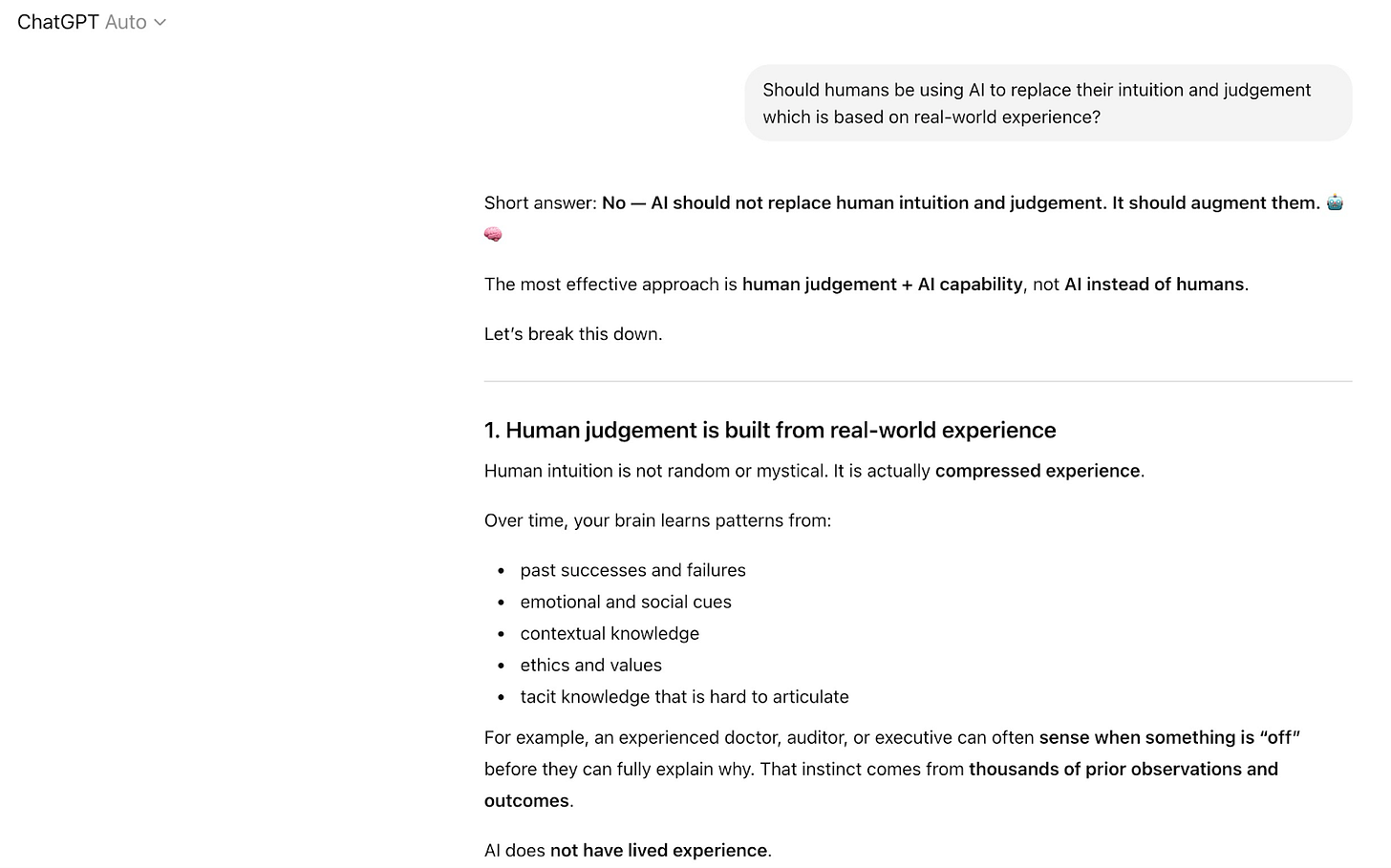

ChatGPT’s Response

Both systems gave essentially the same answer.

No.

AI should augment human judgement, not replace it. This is because AI does not have lived experience, which is required when making pragmatic decisions.

Even the technology itself is effectively telling us:

Use me as a tool.

Don’t replace your thinking with me.

And yet in many workplaces the opposite is happening.

AI is not the problem

Let’s be clear.

AI is one of the most powerful technologies ever created.

But tools have always expanded human capability, not replaced human judgement.

When Excel emerged in the 1980s, many feared it would eliminate accountants. Instead, it removed manual calculations and allowed professionals to focus on analysis, insight and decision-making.

AI is likely to function the same way: as a thinking accelerator.

When you’re the only person questioning the room

During the infamous conference ticket discussion, I was the only person asking questions.

Moments like this test something deeper than intelligence.

They test your emotional discipline when attempting to influence an outcome.

When everyone disagrees with you, your instinct is either to push harder and win the debate, or to say nothing at all.

But real, positive influence comes from calm curiosity.

People listen when they sense someone genuinely trying to understand the situation.

So instead of arguing, I kept returning to the same simple point:

“What do we have to lose by asking the conference organisers?”

The answer was simple.

Nothing.

Three questions to protect your thinking

AI is incredibly useful.

But its output should always be tested against the real-world context you are operating in.

If you want to critically analyse an AI response before relying on it, ask yourself these three questions:

1. The Evidence-Based Question

Did AI reference specific rules, sources, or facts relevant to the situation, or did it generate general guidance without context?

2. The Verification Question

Can I verify this response directly against a reliable source, person, or real-world circumstance? What might AI be missing here?

3. The Consequence Question

If the assumptions behind AI’s response are wrong, what could relying on it cost me?

These questions create a simple discipline:

AI can generate an answer in seconds.

Your job is to test whether it is true in context.

The real skill of the AI age

The most valuable professionals in the next decade will not be the ones who use AI the most.

They will be the ones who think most clearly when AI is wrong.

Because AI can generate answers, but it cannot take responsibility for decisions.

Humans still carry that burden.

And sometimes clarity arrives in the simplest possible form: a question no one thought to ask.

Let’s Get Clear

In an overloaded world, technology will keep delivering answers faster than we can evaluate them.

But clarity does not come from speed.

It comes from curiosity.

The next time a confident answer appears, whether from AI or from the room, pause and ask:

How do we know for sure?

AI can assist us in building clarity.

But it should never become the foundation of our thinking.

That responsibility still belongs to us.

Because assumptions sound convincing.

Until someone tests reality.

